1 min read

7 Keys to Unlocking a Devops Culture: Introducing the vRad Radiology Platform

At vRad, we have a passion for connecting – with each other, with clients, and with patients. In technology, we strive to break down the language...

Remote radiologist jobs with flexible schedules, equitable pay, and the most advanced reading platform. Discover teleradiology at vRad.

Radiologist well-being matters. Explore how vRad takes action to prevent burnout with expert-led, confidential support through our partnership with VITAL WorkLife. Helping radiologists thrive.

Visit the vRad Blog for radiologist experiences at vRad, career resources, and more.

vRad provides radiology residents and fellows free radiology education resources for ABR boards, noon lectures, and CME.

Teleradiology services leader since 2001. See how vRad AI is helping deliver faster, higher-quality care for 50,000+ critical patients each year.

Subspecialist care for the women in your community. 48-hour screenings. 1-hour diagnostics. Comprehensive compliance and inspection support.

vRad’s stroke protocol auto-assigns stroke cases to the top of all available radiologists’ worklists, with requirements to be read next.

vRad’s unique teleradiology workflow for trauma studies delivers consistently fast turnaround times—even during periods of high volume.

vRad’s Operations Center is the central hub that ensures imaging studies and communications are handled efficiently and swiftly.

vRad is delivering faster radiology turnaround times for 40,000+ critical patients annually, using four unique strategies, including AI.

.jpg?width=1024&height=576&name=vRad-High-Quality-Patient-Care-1024x576%20(1).jpg)

vRad is developing and using AI to improve radiology quality assurance and reduce medical malpractice risk.

Now you can power your practice with the same fully integrated technology and support ecosystem we use. The vRad Platform.

Since developing and launching our first model in 2015, vRad has been at the forefront of AI in radiology.

Since 2010, vRad Radiology Education has provided high-quality radiology CME. Open to all radiologists, these 15-minute online modules are a convenient way to stay up to date on practical radiology topics.

Join vRad’s annual spring CME conference featuring top speakers and practical radiology topics.

vRad provides radiology residents and fellows free radiology education resources for ABR boards, noon lectures, and CME.

Academically oriented radiologists love practicing at vRad too. Check out the research published by vRad radiologists and team members.

Learn how vRad revolutionized radiology and has been at the forefront of innovation since 2001.

%20(2).jpg?width=1008&height=755&name=Copy%20of%20Mega%20Nav%20Images%202025%20(1008%20x%20755%20px)%20(2).jpg)

Visit the vRad blog for radiologist experiences at vRad, career resources, and more.

Explore our practice’s reading platform, breast imaging program, AI, and more. Plus, hear from vRad radiologists about what it’s like to practice at vRad.

Ready to be part of something meaningful? Explore team member careers at vRad.

5 min read

Brian (Bobby) Baker

:

November 13, 2017

Brian (Bobby) Baker

:

November 13, 2017

Welcome back to the vRad technology quest as we unlock the 7th and final key!

As always, I recommend you check out the first post in the series if you’re interested in the topics I’ve covered thus far.

Today we’ll discuss how we perform scheduled maintenance on our highly available (24/7/365) platform and our process for minimizing or eliminating disruptions when short notice changes are necessary.

Let’s get to it.

The vRad technology platform is designed to be highly available.

|

High Availability System: application support server(s) that can be consistently accessed with minimal downtime. |

But even in a highly available platform, some changes require scheduled downtime. A significant amount of preparation and planning goes into all of our platform changes, but specifically ones that require system downtime. Because our platform is our link to clients and the patients we collectively serve, minimizing downtime is paramount.

Each month vRad deploys a new major version of our platform. Each of our 3 subsystems (Biz, PACS and RIS) deploys on a separate day. RIS is the only part of the platform that requires us to bring the system down for a short time (15 minutes). We utilize this planned maintenance window each month to also perform miscellaneous additional updates or enhancements.

As you might imagine, we spend quite a bit of time planning and preparing for these changes. We generally start planning 6 months in advance of any changes that require downtime. We work carefully to coincide these changes with our RIS releases to limit our downtime to a single 15-minute maintenance period each month.

We typically perform our RIS scheduled maintenance during a daytime window to lessen the impact to our clients and the patients we serve. As a teleradiology provider, we are primarily an “after-hours” solution which means our busiest times are in the middle of night, weekends and holidays. But nevertheless, a number of our clients our affected during that daytime window. We reduce disruption to those clients by:

By carefully planning, scheduling and communicating our downtime windows, we ensure consistent expectations for our team and our clients.

Usually, changes fall into existing maintenance windows and processes; since we plan 6 months out, there is plenty of time for adequate discussion and risk management in our weekly change management meetings. However, sometimes we don’t have the luxury of advanced planning.

Late last year, a network maintenance scenario arose that serves as an excellent example of how this process works at vRad, and demonstrates how seriously we take keeping our platform healthy and online.

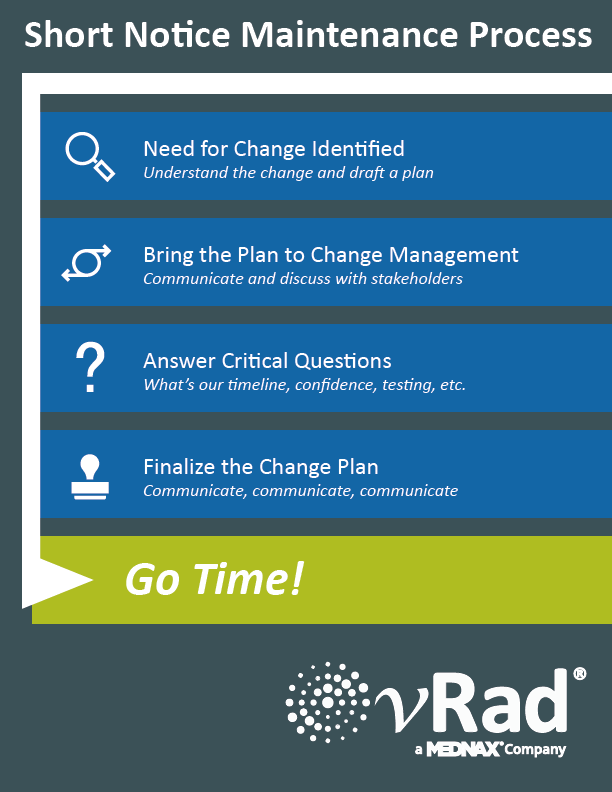

Before we dive into the story, I thought I’d share our short notice change playbook:

The change in our example started when Mark, one of our network engineers, stopped by my office. “I need to make a network change that has a small chance of a network blip,” Mark told me.

“What sort of change?”

“I need to connect the two firewalls to talk to each other about English Beans so that we can use them for coffee stirring later next year.” He said. (Ok, ok, he might have said something else, but the specifics of the change aren’t relevant for our purposes today.)

Mark and I discussed the change and the impact on the environment – we mapped everything out on my office whiteboard and scoured the downtime events calendar to sketch out a preliminary plan.

The possibility of a network blip constitutes a change we would ordinarily plan out months in advance. In this case, we did not have the luxury of knowing about the needed until Mark made the discovery. While we try to avoid these situations, the nature of technology’s rapid changes means they bubble up from time to time – and we must have the agility to address them. Mark and I both felt that we needed to push through this change as soon as we could.

The next step involved communicating and brainstorming. I emailed Shannon, then our CIO, Wade, our Sr. Director of Engineering, team members from Support, team members from Sales and Client Services, team members from Operations and Jodi, our Sr. Director of Marketing.

Did I mention we take these changes seriously?

“We’re going to be making a change to our networking infrastructure in our October RIS release,” I wrote, and proceeded to explain details and risk scenarios. “We can discuss this at our upcoming change management and engineering meetings this week.”

I hit Send and then forwarded the email, including a few more technical details to our engineering team to make sure they were informed.

That Thursday, our Change Management team – a cross-section of the business that discusses all changes to the platform and approves them – met and Mark and I presented the change and our preliminary plan.

These discussions are ripe for probing questions:

“How have we tested this?”

“How long will the platform be down?”

“If this goes wrong, what’s the worst case scenario?”

“What is your projected confidence?”

“Will this impact WebEx or telephones?”

“Could these cause interruptions in our VPN tunnels?”

“Will image ingestion be impacted?”

For each change to our platform, we make sure to ask each other tough questions. Networking changes can be particularly impactful if they don’t work as expected; however, after discussion, our networking team was confident that these changes were low risk and that the production impact would be nothing or at most, a very short blip.

We take a lot of pride in our up-time – many of us have had brothers, sisters, parents or friends who have been in medical emergency situations and we often think of them and know how important it was to have timely medical care. Interruptions to our platform are not simply disruption to business, but possibly disruption to patients in need of care. That matters to us, at a deep and personal level.

We planned for a worst case scenario with the change in question: 30 seconds without network connectivity; the risk profile was larger because these changes were to components that service a significant part of the platform.

We decided to include people beyond our Change Management team to finalize our plan – the same group that I had emailed notice to earlier. We discussed our plan, the current questions and our answers. We were going to be impacting remote team members with this change, as well as tools like WebEx used by Sales and Client Management team members, and potentially our VPNs, so communication was particularly important.

The group discussed and came to alignment:

We would send internal notifications 2 weeks before the release, a week before the release, and the day of the release. We would make the change alongside our normal comprehensive deployment plan for the RIS, adding 20 or so discrete steps required to perform the additional changes. Finally, Jodi helped us add notification information into our client notice for those impacted by the RIS release – letting them know we were doing additional changes that had the possibility of short interruptions, but the expected impact was minimal.

As the date of these changes approached, our engineering and project management teams ensured that all communications that were discussed went out according to plan. We re-tested the changes and reminded our engineering teams about the change – fielding additional questions and ensuring our plan was solid.

Wrapping up the process – and our story – the day of the change came. Our network team carefully orchestrated the updates and almost no connectivity downtime occurred; the entire process took less than 5 minutes from start to finish and our outage-time was immeasurably short. Another solid success.

While the finale of our story may seem anti-climactic, that’s intentional!

All the time spent planning is expressly for the purpose of ensuring business will continue as usual – sometimes an ordinary day can be an extraordinary feat.

It’s been a pleasure sharing with you how vRad does DevOps, from agile development, test automation – and everything in between. I hope you’ve enjoyed coming along for the ride.

If you’ve missed any of the 7 keys, I encourage you to check the vRad Technology Quest Log for some more interesting nuggets about vRad DevOps.

Thank you for taking the time to learn about DevOps at vRad. Our technology team is passionate about our platform – I welcome you to message me on LinkedIn if you have any questions or other topics that might interest you!

Farewell for now,

Brian (Bobby) Baker

1 min read

At vRad, we have a passion for connecting – with each other, with clients, and with patients. In technology, we strive to break down the language...

1 min read

Welcome back to the vRad Technology Quest Series. We’ve shared how vRad builds and deploys code (vRad Development Pipeline (#2)). This article is an...

1 min read

Welcome once again to the vRad Technology Quest series – let's dive in. Cyber Security is a hot topic. The frequency of notable events – ranging from...

vRad (Virtual Radiologic) is a national radiology practice combining clinical excellence with cutting-edge technology development. Each year, we bring exceptional radiology care to millions of patients and empower healthcare providers with technology-driven solutions.

Non-Clinical Inquiries (Total Free):

800.737.0610

Outside U.S.:

011.1.952.595.1111

3600 Minnesota Drive, Suite 800

Edina, MN 55435